- Keyword cannibalization occurs when multiple pages on a single domain target the same search intent, causing internal competition that dilutes ranking signals and wastes crawl budget.

- Establishing a master keyword repository is essential for mapping unique target queries to specific URLs and preventing semantic overlap during high-velocity content production.

- The 30% SERP overlap rule serves as a critical benchmark to determine whether two similar keywords require separate pages or should be consolidated into one comprehensive resource.

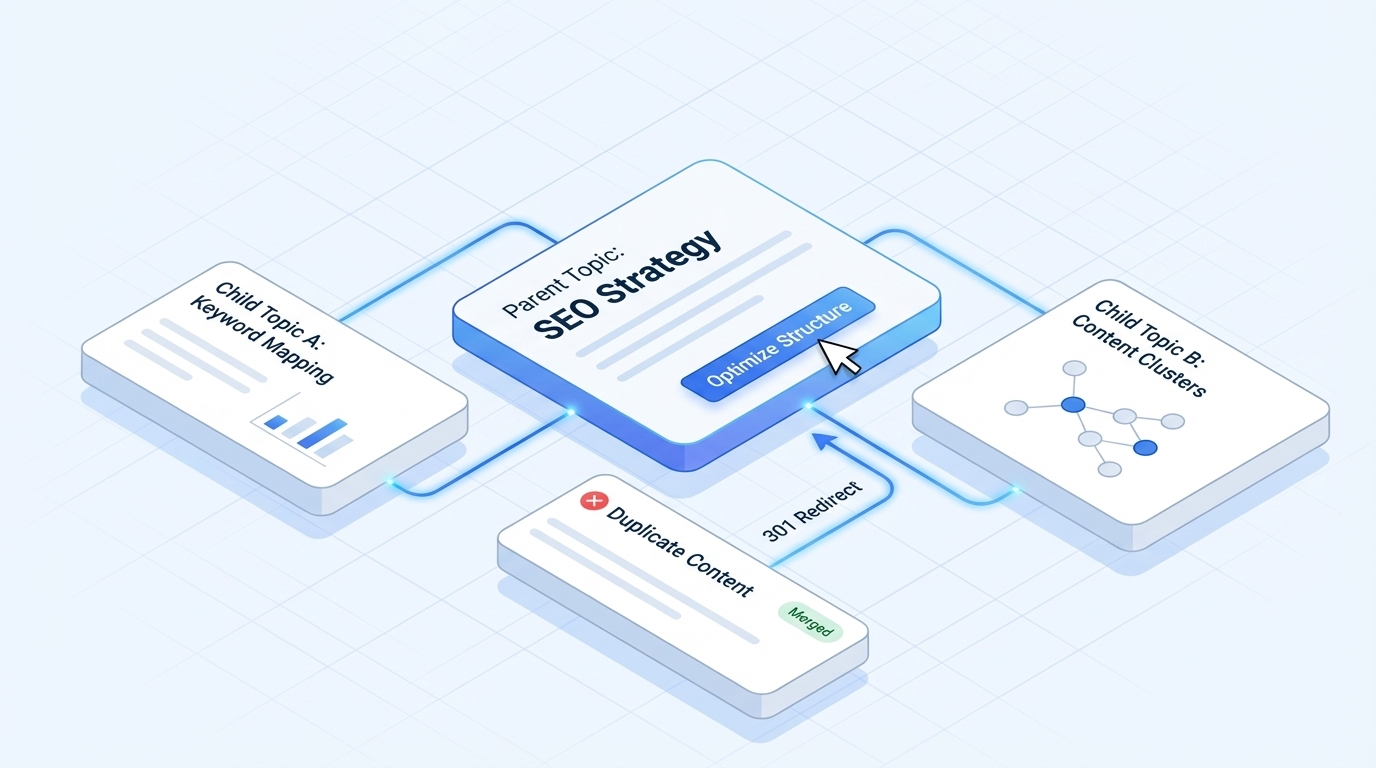

- Implementing a parent-child topic clustering framework creates a logical site architecture where pillar pages act as authoritative hubs that distribute link equity to specific long-tail articles.

- Resolving existing internal competition requires a framework to merge similar assets and use 301 redirects to consolidate the authority of several thin pages into a single high-performing URL.

Aggressive content expansion helps brands build topical authority and capture larger shares of organic search traffic. A high-velocity publishing schedule can accelerate growth, but it often introduces architectural decay to the site. Understanding how that decay sets in is the first step toward hardening your site against ranking signal dilution.

Internal conflict happens when multiple pages on a single domain target the same search intent. SEO managers must balance speed with architectural integrity to ensure every new URL adds value. Managing these complexities requires a proactive approach to keyword mapping and content strategy.

Understanding the Hidden Dangers of High-Velocity Content Production

Keyword cannibalization occurs when a website ends up competing against itself for rankings because two or more pages target the same set of keywords. During a rapid growth phase, the pressure to publish frequently often leads to overlapping topics that serve the same purpose. This internal competition prevents search engines from identifying the most authoritative page for a specific query.

The Definition of Keyword Cannibalization in Modern SEO

Modern keyword cannibalization is a technical issue where search engines struggle to choose the most relevant URL among several similar options. It isn't simply about having the same keyword appear on different pages of a site. It specifically refers to multiple pages that target the same search intent and feature a similar on-page structure.

Search engines allocate a limited crawl budget to each site, which determines how often they visit and how many pages they scan. When two similar pages target the same query, crawlers spend that budget on both URLs instead of one. This inefficiency can slow down the indexation of new content across the rest of your domain.

This phenomenon also affects how link equity is distributed throughout your site architecture. If external sites link to several different pages that all serve the same intent, the ranking power of those backlinks is divided. Consolidating these signals into one primary URL ensures the page has the authority to compete in search results.

The Financial and Performance Costs of Internal Competition

The financial impact of keyword cannibalization is evident in lost conversion potential and inefficient marketing spend. When a team's own pages compete with one another, hours of production time go into redundant assets. One SEO agency recorded a 110% uplift in organic sessions after consolidating efforts on a real estate website.

Resources are also wasted when two internal pages compete for the same position. If you have two pages, each with three high-quality backlinks, their combined value is lower than that of a single page with six high-quality backlinks. This split in authority keeps both pages from reaching their full potential and limits the overall return on investment for your SEO articles.

Cannibalization can frustrate users and hurt your brand's reputation. If a user searches for a product and lands on an informational blog post instead of a category page, they may leave your site. This mismatch between intent and content leads to higher bounce rates and lower click-through rates across your most important keywords.

Why Aggressive Expansion Increases Cannibalization Risks

Scaling content production naturally increases the risk of architectural decay. As a content library grows into thousands of pages, the nuances between long-tail keywords become thin. This makes it easier for strategists to assign overlapping topics to different writers accidentally.

The Problem of Semantic Overlap and Topic Thinness

Modern search engines have shifted from simple keyword matching to intent-based SEO. They group similar terms and synonyms under a single intent umbrella to provide a better user experience. If you target two keywords that seem different but serve the same user goal, you'll likely encounter ranking instability.

Search engines might even occasionally switch the rankings of your pages as they test which one performs better. This constant flipping makes it challenging for your primary page to reach the top and stay there consistently. It's a sign that the search engine sees both pages as roughly equally relevant but cannot decide which to prioritize.

Thin differentiation is the primary driver of this problem during rapid expansion phases. When a team is tasked with high-velocity publishing schedules, they may create multiple pages that differ only slightly. This lack of distinct value prevents any single page from standing out as the definitive resource on the topic.

Targeting many keywords with very similar meanings can confuse your website's topical map. Each page should serve a unique purpose that justifies its existence as a separate URL. If a new page doesn't offer a fresh perspective or unique data, it should probably be integrated into an existing piece of content.

Topic Fatigue and the Struggle for Originality

Content teams often run out of unique angles when forced to publish high volumes within a narrow niche. This leads to topic fatigue, where the same information is repeated across different blog posts with only slight changes. Such repetition results in content clusters that lack distinct value propositions for the reader.

Repetitive content can occur when companies use a small content database to generate pages programmatically. For example, real estate websites often have indexable category pages for different locations that contain no unique text. This lack of originality makes it difficult for search engines to distinguish between various pages on the site.

Maintaining a unique editorial voice is necessary for every new URL added to the library. Every piece of content should have a clear reason for being published that isn't already covered elsewhere. If your team cannot find a new angle for a topic, it's a sign that existing assets may fully cover the niche.

Intent Fragmentation vs. Keyword Cannibalization

It's important to distinguish between intent fragmentation and actual cannibalization within a large content library. Intent fragmentation occurs when a single keyword has multiple meanings, requiring different types of content to satisfy each one. Cannibalization happens when you produce multiple pages for the same meaning, causing them to compete for the same SERP real estate.

A brand might target the keyword "Apple" with a page about fruit and another about consumer technology. These pages don't cannibalize each other because they serve entirely different user needs. However, if you have two separate blog posts titled "Best budget laptops 2024" and "Top cheap laptops 2024," you're creating internal competition. These pages serve the same intent and will likely confuse search algorithms regarding which URL to prioritize.

Identifying where intent overlaps requires a deep dive into how users perceive specific queries. You should group keywords by their underlying goal rather than their literal phrasing. This practice ensures your site architecture remains lean and every page serves a specific, non-competitive purpose in your library.

Building a Preventive Keyword Mapping Framework

Effective prevention of keyword cannibalization requires a blend of editorial oversight and automated monitoring tools. A proactive strategy involves using a structured mapping framework to organize your targets long before writing begins. A well-organized content calendar acts as your first line of defense against creating a competitive site architecture.

Establishing a Master Keyword Repository

A master keyword repository is a central database that tracks every keyword your brand targets. This repository should include the primary keyword, the associated URL, and the page's core intent. Maintaining a central database enables distinct URL mapping, with every target query anchored to a specific, permanent address on the site.

To identify potential conflicts, you should research the main queries visitors use to find your existing pages. Before creating new content, check whether the proposed topic is likely to compete with pages that already live on your site. This simple step ensures every new piece of content expands your reach rather than overlapping with current rankings.

Your repository should also track secondary keywords and the date each page was last updated. Keeping this data in one place allows you to see the gaps in your content strategy more clearly. It also helps you identify older pages that might need refreshing or merging with newer assets.
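
The repository described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the class names, field names, and intent labels are assumptions, and the in-memory list stands in for whatever spreadsheet or database your team actually uses.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class KeywordEntry:
    """One row of the repository (field names are illustrative)."""
    primary_keyword: str
    url: str
    intent: str  # e.g. "informational", "commercial", "transactional"
    secondary_keywords: list[str] = field(default_factory=list)
    last_updated: Optional[date] = None


class KeywordRepository:
    """In-memory stand-in for a shared spreadsheet or database."""

    def __init__(self) -> None:
        self._entries: list[KeywordEntry] = []

    def find_conflicts(self, keyword: str, intent: str) -> list[KeywordEntry]:
        """Return entries that already claim this keyword for the same intent."""
        needle = keyword.strip().lower()
        return [
            e for e in self._entries
            if e.intent == intent
            and needle in {e.primary_keyword.lower(),
                           *(k.lower() for k in e.secondary_keywords)}
        ]

    def add(self, entry: KeywordEntry) -> None:
        """Register a new target, refusing keywords that are already mapped."""
        conflicts = self.find_conflicts(entry.primary_keyword, entry.intent)
        if conflicts:
            urls = ", ".join(c.url for c in conflicts)
            raise ValueError(f"'{entry.primary_keyword}' is already mapped to: {urls}")
        self._entries.append(entry)
```

Checking `find_conflicts` before a topic is assigned to a writer is the point of the exercise. The sketch only matches exact phrases, so synonyms that share an intent would still need a SERP comparison or a human review.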

Assigning Primary Intent to Each Target URL

Categorizing content by intent is one of the most effective ways to avoid internal competition. You can target a specific keyword on multiple pages if the search intent is strictly different. For example, a travel guide can target a location for informational intent while a hotel listing page targets the same location for transactional intent.

Apple provides a great example of this by occupying multiple top organic results for branded queries like "MacBook Pro 13-inch." Their first result focuses on key product features, while the second allows shoppers to start the checkout process. The third result provides in-depth technical specifications for users seeking detailed data.

Two pages can coexist for the same broad topic if they address different stages of the buyer's journey. A how-to guide is designed for people in the awareness stage, while a product comparison page serves those in the consideration stage. Clearly defining these roles ensures search engines and users understand the unique purpose of each URL.

Leveraging SERP Overlap Analysis to Differentiate Content

Analyzing search engine results pages is a reliable way to validate your content plan. This technical method helps you determine if two keywords are distinct enough to warrant separate pages on your domain. If results for two different queries are nearly identical, search engines likely view them as the same topic.

Using the 30% Overlap Rule for Content Planning

The 30% overlap rule is a critical benchmark for SEO strategists. If more than 30% of the URLs in the top 10 results are the same for two keywords (that is, four or more shared URLs), those keywords should be targeted on one page. That level of overlap indicates Google treats the intent of the two queries as fundamentally the same.

You can perform a SERP overlap analysis manually by opening two browser tabs and comparing the search results side by side. Record the URLs that appear in both results in a spreadsheet to calculate the percentage of commonality. Using this data-driven approach prevents you from wasting time on pages that will ultimately compete with each other.
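
If you already have the two result lists in a spreadsheet, the percentage can be computed with a short script. The sketch below assumes you have collected the top 10 URLs for each keyword yourself; the function names and the URL normalization (ignoring scheme, `www.`, query strings, and trailing slashes) are illustrative choices, not part of the rule.

```python
from urllib.parse import urlsplit

OVERLAP_THRESHOLD = 0.30  # the 30% benchmark described above


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare as equal."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower().removeprefix("www.")
    return host + parts.path.rstrip("/")


def serp_overlap(results_a: list[str], results_b: list[str], top_n: int = 10) -> float:
    """Share of the top-N positions occupied by URLs that appear in both SERPs."""
    a = {normalize(u) for u in results_a[:top_n]}
    b = {normalize(u) for u in results_b[:top_n]}
    return len(a & b) / top_n


def should_consolidate(results_a: list[str], results_b: list[str]) -> bool:
    """True when the two keywords share more than 30% of their top results."""
    return serp_overlap(results_a, results_b) > OVERLAP_THRESHOLD
```

Three shared URLs out of ten gives exactly 0.30, which does not exceed the threshold, so the keywords would keep separate pages; four shared URLs would tip them into a single page.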

Following this rule helps maintain a lean and efficient site structure. It forces you to consolidate similar ideas into comprehensive guides that are more likely to rank well. This strategy also simplifies your internal linking by creating a single, authoritative destination for a specific subject.

Deconstructing Competitor SERPs for Content Gaps

Looking at top-ranking pages for your target keywords can reveal what information is currently missing. Differentiation is not just about the keywords you use but also about the specific subtopics you cover. You should aim to provide a unique perspective that your competitors have ignored in their own content.

The People Also Ask sections and related searches are excellent tools for finding these unique angles. These sections highlight the specific questions that users have about a topic. By answering these questions in a way your competitors haven't, you create a distinct value proposition for your page.

Deconstructing the SERP also helps you understand the preferred format for a specific query. If all the top results are lists, creating a long-form narrative might not satisfy user intent. Aligning your format with user expectations while providing unique data is a smart way to stand out.

Implementing Parent-Child Topic Clustering for Architectural Clarity

Implementing a parent-child topic-clustering strategy creates a logical hierarchy that prevents subtopics from competing with the main pillar page. This hierarchy defines the relationship between broad overview pages and specific sub-pages. A clear internal linking structure reinforces these relationships and prevents pages from competing for the same rankings.

The Role of Pillar Content in Defining Broad Themes

Pillar pages should target high-volume, broad-intent keywords to serve as the main resource for a general topic. These pages are comprehensive and act as a distribution hub for traffic to more specific articles. Pillar pages organize cluster content, signaling their authority to search engines through depth and breadth.

Creating cornerstone content helps define your site's content hierarchy from the top down. Each pillar page should link out to several cluster pages that explore narrower subtopics in greater detail. This organization makes it easier for search engines to understand your knowledge in a particular niche.

Pillar content should remain evergreen and serve as the foundation of your content library. It provides the general context that allows your more specific, long-tail pages to rank for niche queries. When your site is organized this way, you can dominate search engine results pages for the core keyword.

Managing Internal Link Silos and Equity Flow

Internal links are among the most powerful tools for guiding both Google and visitors to your most important pages. You can use these links to signal which page is the strongest competitor for a specific topic. If multiple pages are optimized for the same keyword, search engines will struggle to identify the priority page without clear linking signals.

Child pages should always link back to their parent pillar page using descriptive anchor text, which signals the page's relevance to search engine crawlers. You should prioritize internal links to pages where you're the strongest competitor or where you earn the most money.
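
The link-back requirement is easy to verify once you have a cluster map and a list of each page's internal outlinks, for example from a site crawl. The following sketch assumes both are available as plain dictionaries; the function name and data shapes are hypothetical, and it checks only that a link exists, not the quality of its anchor text.

```python
def missing_parent_links(
    clusters: dict[str, list[str]],
    outlinks: dict[str, set[str]],
) -> list[tuple[str, str]]:
    """Return (child, pillar) pairs where the child page never links to its pillar.

    clusters maps each pillar URL to its child URLs.
    outlinks maps each page URL to the set of internal URLs it links to.
    """
    missing = []
    for pillar, children in clusters.items():
        for child in children:
            if pillar not in outlinks.get(child, set()):
                missing.append((child, pillar))
    return missing
```

Any pair this returns is a child page that is not passing a relevance signal back to its hub.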

Avoid the danger of cross-linking between competing topics, as this can blur the lines of your site architecture. Every link should have a clear purpose in helping the user find more relevant information. Unorganized internal linking can actually create cannibalization issues by making different pages seem equally important for the same query.

You can also use internal links to resolve existing cannibalization problems. By changing the anchor text of links pointing to a competing page, you can shift ranking signals toward your preferred URL. This tactical use of link equity is a significant part of maintaining a healthy and high-performing website.

The Role of Detailed Content Briefs in Large-Scale Production

Detailed content briefs serve as essential guardrails during large-scale content production cycles. A brief should do more than list keywords; it should explicitly define the topic's boundaries to prevent overlap. By listing forbidden keywords or related URLs, you ensure writers don't accidentally cover ground already claimed by existing assets.

Briefs should also specify the target search intent and the new page's unique value proposition. If the writer knows exactly which questions they're responsible for answering, they're less likely to wander into another page's territory. This level of granular planning is what keeps a high-velocity publishing schedule from descending into architectural chaos.
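
A brief with explicit boundaries can also be checked mechanically before a draft goes to review. The sketch below is one possible shape for that check; the `ContentBrief` fields and the `forbidden_hits` helper are assumptions made for illustration, not an established format.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """Boundaries for one planned page (field names are illustrative)."""
    target_keyword: str
    intent: str
    forbidden_keywords: list[str] = field(default_factory=list)
    related_urls: list[str] = field(default_factory=list)


def forbidden_hits(brief: ContentBrief, draft: str) -> dict[str, int]:
    """Count case-insensitive, whole-phrase uses of each forbidden keyword."""
    hits = {}
    for phrase in brief.forbidden_keywords:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        count = len(re.findall(pattern, draft, flags=re.IGNORECASE))
        if count:
            hits[phrase] = count
    return hits
```

A non-empty result does not automatically mean the draft is wrong, since a passing mention with a link to the owning page can be fine. It simply flags where an editor should look for territory drift.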

Standardizing these briefs across your entire team helps maintain technical accuracy and consistency. When every writer follows the same structural requirements, the resulting content library feels like a cohesive whole. It ensures that every new piece of content fits perfectly into your existing topical map without causing friction.

Managing Programmatic SEO Risks

Programmatic SEO allows brands to generate thousands of pages quickly, but it carries a high risk of keyword cannibalization. This often happens when pages use a small content database to create multiple locations or category entries. Without unique data points, search engines may see these pages as duplicate versions of the same entity.

To mitigate these risks, you must inject unique, high-value data into every programmatically generated page. For a real estate site, this might include specific local market statistics, school district data, or neighborhood-specific crime rates. These distinct details transform a generic template into a unique resource that satisfies a specific, localized search intent.

Maintaining original editorial content alongside your database-driven facts is also necessary for long-term success. Even if most of the page is automated, including a unique introductory paragraph can signal originality to search engines. This balance allows you to scale your reach without triggering the negative effects of thin or repetitive content.
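
One rough way to spot template pages that differ only by a place name is to compare their text with a similarity score. The sketch below uses Jaccard similarity over three-word shingles; this is a swapped-in, generic technique rather than anything search engines are known to use, and the 0.8 threshold is an arbitrary assumption you would tune on your own pages.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping word sequences of the given size."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0  # two empty pages are treated as identical
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.8):
    """Return (url_1, url_2, score) for page pairs at or above the threshold."""
    sets = {url: shingles(body) for url, body in pages.items()}
    flagged = []
    for u1, u2 in combinations(sets, 2):
        score = jaccard(sets[u1], sets[u2])
        if score >= threshold:
            flagged.append((u1, u2, round(score, 2)))
    return flagged
```

Pairs that score high are candidates for extra local data or a unique introduction, in line with the mitigation steps above.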

Technical Strategies for Distinguishing Similar Long-Tail Variations

As a content library scales into the thousands of pages, manual mapping becomes increasingly difficult. Advanced technical methods are required to ensure that very similar topics don't conflict in search results. These strategies focus on segmenting your audience and creating clear boundaries between pages.

Geographic and Demographic Segmentation

Differentiating content by tailoring it to specific locations or user personas is an effective way to avoid overlap. A page titled "SEO for lawyers" and another titled "SEO for dentists" serve different audience segments. Even if the core principles are the same, the specific examples and terminology create distinct targets.

To ensure search engines recognize these as unique pages, you must modify the on-page signals. This includes using specific headers, meta descriptions, and localized keywords that reflect the unique needs of each group. When content is tailored to a specific demographic, it satisfies search intent that a general page cannot.

This approach allows you to capture a variety of long-tail traffic without creating internal competition. Each page acts as a specialist resource for its intended audience. By focusing on the unique pain points of different segments, you provide more value than a one-size-fits-all article.

Using Format-Based Distinctions to Avoid Conflict

Different content formats can help distinguish pages that target similar themes. A guide titled "Keyword Research Guide" serves a different user need than a "Keyword Research Template." Both can rank on your site because they offer different utilities to the searcher.

You should use headers, meta titles, and structured data to signal these format differences to Google. Clear labeling helps the search engine understand that one page is informational while the other is a tool. This clarity prevents the two pages from competing for the same spot in the SERP.

Other formats, such as case studies, checklists, or ultimate guides, also offer unique ways to cover a topic. When you plan your expansion, consider which format best suits the user's current stage in the buying process. Using a variety of formats makes your content library more useful and reduces the likelihood of semantic overlap.

Advanced Internal Linking Audits for Large Sites

Conducting advanced internal linking audits is a necessity for maintaining a healthy architecture as your site grows. A comprehensive audit uses crawl maps to visualize how pages are connected and how authority flows through your domain. These maps often reveal orphan pages that are cannibalizing traffic from your main pillar guides without providing any support.

During the audit, you should identify pages with high impression counts but low click-through rates. This often suggests that search engines are serving a page that doesn't perfectly match the user's intent. By adjusting the internal links pointing to these pages, you can guide Google toward the more appropriate, high-converting URL.
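
The impressions-versus-clicks check can be run over exported page data. This is a minimal sketch: the `PageStats` shape is hypothetical, and the cutoffs of 1,000 impressions and a 1% click-through rate are placeholder assumptions, since a sensible threshold depends on the query type and ranking position.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Performance totals for one URL over the audit period."""
    url: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def low_ctr_pages(pages: list[PageStats],
                  min_impressions: int = 1000,
                  max_ctr: float = 0.01) -> list[PageStats]:
    """Pages shown often but clicked rarely, most-seen first."""
    flagged = [p for p in pages
               if p.impressions >= min_impressions and p.ctr < max_ctr]
    return sorted(flagged, key=lambda p: p.impressions, reverse=True)
```

The resulting list is a starting point for manual review, not a verdict: a low click-through rate can also come from a weak title or a low average position.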

Regularly reviewing your linking structure helps you catch unorganized patterns before they impact your rankings. You can use these insights to refine your topic silos and ensure that link equity reaches your most profitable content. A well-audited site remains competitive even when publishing at a high-velocity pace.

Conducting a Content Library Audit Before Your Next Growth Phase

Regular content library auditing is the only way for high-growth brands to catch semantic overlap before it dilutes the site's authority. It's impossible to expand cleanly if your current foundation is already cluttered with overlapping pages. High-growth brands should treat auditing as a continuous process rather than a one-time task.

Identifying Existing Conflicts with Search Console

Google Search Console is an excellent tool for identifying keywords for which multiple URLs are receiving impressions and clicks. In the Performance report, filter by a specific query and open the Pages tab to see whether the ranking URL keeps swapping. This flipping behavior suggests that Google cannot settle on which of your pages best answers the query.
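
At scale, the same check can be applied to exported performance data instead of one query at a time. The sketch below assumes each row is a dictionary with `query`, `page`, and `impressions` keys; that row shape, the function name, and the 20% impression-share cutoff are assumptions for illustration, so adapt them to however you export the data.

```python
from collections import defaultdict


def competing_urls(rows: list[dict], min_share: float = 0.2) -> dict[str, list[str]]:
    """Map each query to its pages when two or more pages each earn
    at least min_share of that query's impressions."""
    by_query: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for row in rows:
        by_query[row["query"]][row["page"]] += row["impressions"]

    conflicts = {}
    for query, pages in by_query.items():
        total = sum(pages.values())
        if total == 0:
            continue
        strong = sorted(
            (page for page, imp in pages.items() if imp / total >= min_share),
            key=lambda page: pages[page],
            reverse=True,
        )
        if len(strong) > 1:
            conflicts[query] = strong
    return conflicts
```

The share cutoff keeps a stray impression on an unrelated page from being reported as a conflict.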

Identifying keyword cannibalization involves searching for all content on a particular topic using site searches or third-party tools. Once you have a list of similar pages, you can compare their performance data to see which is strongest. This analysis helps you prioritize which conflicts to resolve to improve your content campaign's profitability.

Look for pages that have a high number of impressions but very low click-through rates. This often happens when the wrong page is ranking for a keyword, leading to a mismatch in user intent. By identifying these performance gaps, you can make informed decisions about how to reorganize your content.

The "Merge, Delete, or Redirect" Decision Framework

Once cannibalization is identified, you must decide how to resolve it. Consolidating content often means combining several thin pages into a single power page. This improves the user experience by reducing confusion and simplifying your domain's internal linking structure.

Merging three separate articles on an SEO audit into a single guide often leads to better rankings for a wider range of keywords. When you consolidate, make sure to implement 301 redirects from the old URLs to the new one. Google typically removes the redirected URLs from its index within a few weeks, though you should keep the redirects in place for at least a year.
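
When several rounds of merging pile up, old URLs can end up redirecting to pages that were themselves redirected later. The sketch below builds a redirect map from a list of merges and flattens any chains so every old URL points straight at its final destination. The function names and the dictionary format are assumptions; translating the result into your server's or CMS's redirect rules is left out.

```python
def build_redirect_map(merges: dict[str, list[str]]) -> dict[str, str]:
    """merges maps each surviving URL to the old URLs folded into it."""
    redirects: dict[str, str] = {}
    for target, old_urls in merges.items():
        for old in old_urls:
            if old in redirects and redirects[old] != target:
                raise ValueError(f"{old} is assigned to two different targets")
            redirects[old] = target
    return redirects


def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Resolve chains so each source redirects directly to its final URL."""
    flat = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        while target in redirects:  # the target was itself redirected later
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat
```

Flattening keeps each old URL one hop away from the consolidated page, and the loop check catches the case where two pages were accidentally redirected to each other.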

Page deletion is generally not recommended for cannibalization because it can lead to the loss of valuable backlinks. If you delete a page that has authority, you risk losing topical relevance and causing 404 errors. It's better to redirect that authority to a relevant existing page to preserve your site's ranking power.

Page deindexation is another option, but it only masks the problem from search engines rather than solving it. It doesn't address the underlying issues of duplicate content or wasted crawl budget. Following a clear framework of merging and redirecting is the most effective way to strengthen your site's architecture.

The Impact of AI Overviews on Keyword Competition

The rise of AI-generated summaries in search results is changing the way users interact with the SERP. Google's AI models often synthesize information from multiple sources to provide a single, comprehensive answer to a query. In this environment, having multiple competing pages for the same intent makes it harder for the AI to cite your brand as the primary authority.

Consolidating your content into definitive, high-authority guides increases your chances of appearing in these AI overviews. The algorithm prefers clear, well-structured sources that provide exhaustive information on a topic. If your knowledge is fragmented across several thin pages, you're less likely to be chosen as the definitive answer.

To compete in an AI-driven landscape, you must focus on creating unique value and deep topical coverage. This shift makes it more important than ever to resolve cannibalization issues and maintain a clean site architecture. Brands that prioritize quality over quantity will be better positioned to dominate these new search features.

User Experience Metrics as Cannibalization Signals

User experience metrics such as bounce rate and dwell time can serve as early indicators of keyword cannibalization. When search engines serve the wrong page for a query, users often realize the mismatch immediately and return to the results page. This negative interaction signals to the algorithm that the page isn't satisfying the search intent.

Monitoring these metrics allows you to catch cannibalization issues that might not be obvious through ranking data alone. If a page ranks well but has an unusually high bounce rate, it might be competing for the wrong intent. Investigating these discrepancies helps you identify where your keyword mapping has failed.

By resolving these intent mismatches, you improve both your search performance and your overall brand reputation. Users appreciate finding exactly what they're looking for without having to navigate through irrelevant content. Focusing on user experience ensures your expansion strategy drives real business results rather than just empty traffic.

Monitoring and Maintaining Site Health During Rapid Publishing Sprints

Preventing keyword cannibalization is an ongoing task that requires constant monitoring as the search landscape changes. As your site grows, new overlaps can emerge even in a well-planned library. High-velocity teams must use automated reporting to stay ahead of potential issues and conduct regular competitor gap analyses.

Setting Up Automated Alerting Systems

SEO software can be configured to send alerts for significant ranking fluctuations or competing URL reports. These tools save time by highlighting potential conflicts before they lead to a significant loss of organic traffic. Integrating these alerts into your team's workflow ensures technical health is always a priority.

Setting up automated checks allows you to maintain a high publishing velocity without sacrificing quality control. When an alert is triggered, your team can quickly investigate the cause and determine if a new page is competing with an old one. This reactive capability is a necessary complement to your proactive mapping framework.

Regular reporting should be a standard part of your content management process. By reviewing site health metrics monthly, you can identify trends in how your content is being indexed. This visibility helps you refine your expansion strategy and ensure every new asset contributes to your growth.

Rank Tracking for Multiple URLs per Keyword

It's important to track more than just your top ranking URL for primary keywords. Seeing if your site occupies multiple spots in the SERP provides insights into your topical dominance. If those spots are occupied by the correct pages for different intents, it's a sign of a healthy strategy.

However, if multiple pages are ranking and then disappearing, it's a sign of impending cannibalization. Tracking the stability of your rankings helps you differentiate between intentional dominance and accidental competition. Double rankings are only a benefit if both pages satisfy unique user needs and drive conversions.
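
Ranking stability can be quantified from a rank tracker's history. The sketch below assumes you have, for one keyword, a chronological list of the URL that ranked highest at each check, with `None` where the site did not rank. The function names and the limit of two flips are illustrative assumptions.

```python
from typing import Optional


def count_url_flips(history: list[Optional[str]]) -> int:
    """Count how often the top-ranking URL changes between consecutive checks.

    Checks where the site did not rank (None) are skipped.
    """
    ranked = [url for url in history if url is not None]
    return sum(1 for prev, cur in zip(ranked, ranked[1:]) if prev != cur)


def is_unstable(history: list[Optional[str]], max_flips: int = 2) -> bool:
    """Flag a keyword whose ranking URL has changed more than max_flips times."""
    return count_url_flips(history) > max_flips
```

A keyword that one page holds steadily scores zero, while a keyword that bounces between two URLs accumulates flips quickly and is worth checking against your keyword map.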

Interpreting this data allows you to fine-tune your internal linking and on-page optimization. If the wrong page keeps ranking for a high-value keyword, you may need to revive your decaying content to better align with the intent. Constant monitoring ensures your site architecture remains optimized for both users and search engines.

Scale Your Reach with Automated SEO Content Execution

Aggressive content expansion is only effective when it's built on a foundation of unique intent and clear site architecture. Successful SEO strategies must balance the need for high-velocity production with meticulous keyword mapping and regular SERP overlap analysis. By prioritizing distinct value for every URL and using parent-child clustering to organize your assets, you can avoid the internal competition that hinders organic growth.

Auditing your existing content and monitoring site health are necessary steps for any high-growth brand. Consolidating thin pages and managing internal link equity ensures your ranking signals remain strong and focused. When every piece of content serves a unique purpose, your website becomes a more authoritative and reliable resource for your target audience. This clarity is essential for surviving the shifts in AI-driven search and maintaining long-term visibility.

We specialize in helping brands scale their content libraries without risking keyword cannibalization. Our SaaS platform and professional services ensure that every piece of content is ready to publish, unique, and strategically aligned with your existing site architecture. Schedule a demo today to see how Brand Voice can help you publish high-quality SEO content at scale and drive real results for your business.