- Google integrated helpful content signals into its core ranking algorithm in March 2024, shifting to a continuous evaluation process that prioritizes people-first content over search-engine-first material.

- The Google classifier utilizes sitewide signals to determine domain helpfulness, meaning that low-value content can suppress the visibility of higher-quality assets across an entire website.

- Establishing topical authority requires demonstrating E-E-A-T by incorporating first-hand experience, original data, and unique human insights that generic AI tools cannot replicate.

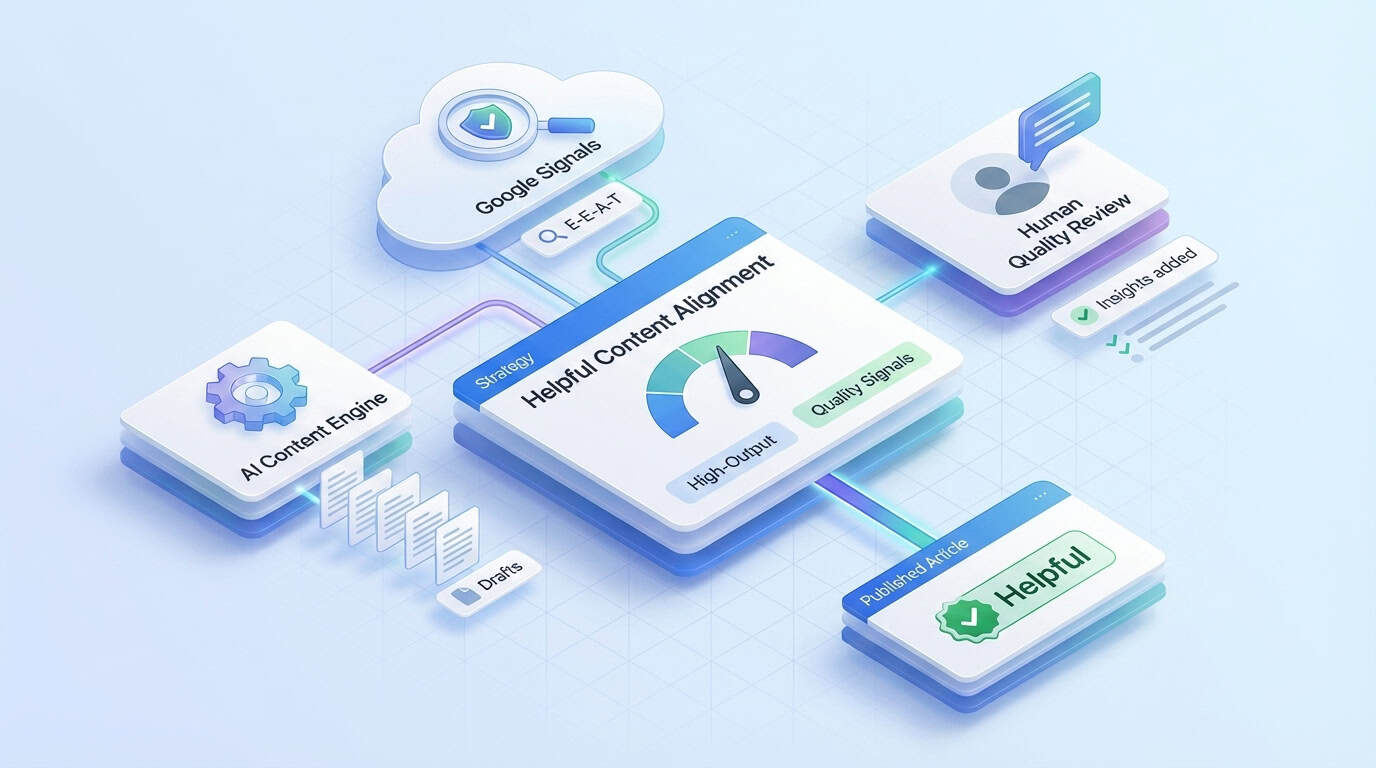

- Successful high-output strategies must utilize an AI-human hybrid workflow and editorial scorecards to ensure all published articles meet search quality rater guidelines and satisfy user intent.

Scaling content production against machine-learning ranking systems like RankBrain and SpamBrain requires a precise strategy. A high-frequency publishing schedule of more than 30 articles per month can help you establish topical authority and capture market share, but you can't afford to trigger the algorithmic tripwires that detect low-effort fluff. Google's current systems have evolved to prioritize depth, which makes a volume-first approach a dangerous gamble unless it's anchored in quality.

The tension between quantity and quality has never been more visible in search results. While high output is a necessary component of modern growth, it must align perfectly with the Google helpful content system to remain effective. Success requires a strategy in which every single page serves a distinct purpose for the reader, rather than just occupying space on a crawl budget. Understanding the mechanics of these systems is the first step toward hardening your content portfolio against algorithmic shifts.

What is the Google Helpful Content System and Why Does It Matter for Scale?

Building a resilient content engine starts with understanding the rationale behind Google's algorithmic shifts. Modern content production serves two audiences simultaneously: human readers and a machine-learning system that is increasingly adept at estimating human satisfaction. The core ranking system determines which brands survive a core update and which ones lose their visibility overnight.

The Transition from the Helpful Content Update to Core Algorithms

The landscape shifted significantly when Google launched the Helpful Content Update in August 2022. Rather than a minor adjustment to ranking factors, it was a fundamental effort to support people-first content across the web. For the first time, the search engine had a dedicated mechanism to identify and suppress SEO articles that seemed to have been written by bots for bots.

The stakes grew even higher on March 5, 2024, when Google folded these helpful content signals directly into its core ranking algorithm. The merge transformed helpfulness from a periodic check into a continuous evaluation process. The quality of your output is now assessed on an ongoing basis, so in principle recovery no longer hinges on waiting for a dedicated helpful content update.

The integration of these signals signifies that the helpful classifier is always active and constantly learning from user behavior and content patterns. For high-output brands, this means there's no room for a launch-and-forget mentality. You're operating in an environment where the bar for entry is perpetually rising. The algorithms are designed to filter out anything that doesn't provide genuine value.

Understanding Sitewide Signals and Their Impact on High-Output Strategies

One of the most critical aspects of this system is its use of sitewide ranking signals. Google uses an automated machine-learning classifier to scan a website's content and determine its overall helpfulness. The core algorithm evaluates pages within the context of the entire domain rather than in isolation, which means each page is judged partly by the quality of the content surrounding it.

If too many pages on your site are flagged as low-value or unhelpful, your entire domain's visibility could suffer. Even your most comprehensive guides, with original data and expert citations, might drop in rankings because the dead weight of thin content drags them down. It's a weighted system where the presence of search-engine-first content creates a toxic environment for your top-tier assets. You must treat every URL as a representative of your brand's total quality.

Scaling requires a clean portfolio to avoid such algorithmic suppression. You can't hide a few hundred mediocre landing pages behind a dozen great blog posts. Every piece of content you publish either contributes to your domain's authority or acts as a potential liability.

Differentiating Between People-First Content and Search-Engine-First Content

Transitioning to a people-first strategy requires a fundamental psychological shift within your content team. You have to stop asking what keywords will rank and start asking what the reader actually needs to know. This change in perspective is what separates sustainable growth from short-term traffic spikes that eventually collapse under scrutiny.

The Dangers of Producing Content Solely for Search Rankings

The primary indicator of search-engine-first content is a focus on manipulation rather than education. This often involves excessive automation without human oversight, resulting in syntactically redundant, low-information-density prose. When you write on topics solely because they're trending, without having anything unique to add, you're essentially creating noise. Fostering an editorial culture that rejects search-engine-first tactics requires a commitment to answering questions that keyword tools might not yet identify.

Content mills often fall into the trap of writing for word counts and keyword densities rather than user clarity. If a reader finishes your article and immediately has to go back to the search results to find a better answer, you've failed the helpfulness test. Google tracks these behavioral signals regularly. A high bounce rate back to the search results is a loud indicator that your content didn't satisfy the intent.

Producing content solely for rankings is a race to the bottom that ignores the user's journey. It relies on tricks and shortcuts that might have worked five years ago but are now easily detected by modern classifiers. Scaling this type of content is effectively scaling your own obsolescence. You are building a foundation on shifting sands that Google will eventually wash away.

Identifying the Characteristics of Truly Helpful Informational Content

Truly helpful content is defined by its ability to provide a satisfying experience that leaves the user feeling informed. It serves a real audience need rather than just trying to grab search traffic through generic summaries. According to Google's self-assessment questions, helpfulness is rooted in original information, comprehensive reporting, and substantial value that sets a page apart from others in the search results.

Added value can take many forms, such as unique data sets, expert interviews, or proprietary insights gained through real-world experience. When you produce an article that offers a perspective the reader can't find anywhere else, you're building topical authority that algorithms can recognize. Providing this level of depth ensures the content is more than just a restatement of information already found on the first page of search results.

At its core, people-first content satisfies a clear, user-focused purpose. It guides the reader through a problem or teaches them a new skill with clarity and precision. By focusing on these qualities, high-output teams can ensure that their volume actually translates into long-term trust and recurring traffic. High-output strategies require human editorial oversight to maintain these standards at scale.

Maximizing Information Gain Through Unique Data and Perspectives

Google's recent updates emphasize the importance of information gain. This refers to the new information a document provides compared to other documents a user has already seen on the same topic. To excel, every high-output article must offer a "delta" of value that justifies its existence in the search results.

You can achieve this by including proprietary survey data, contrasting viewpoints from industry experts, or a deep-dive analysis that goes beyond the "top 10" lists commonly found in search results. By consistently providing information unavailable elsewhere, you solidify your site as an essential node in the broader Knowledge Graph.
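One rough way to sanity-check the "delta" described above before publishing is to measure how much of a draft's vocabulary is absent from the pages it will compete with. The sketch below is only an editorial proxy under stated assumptions: the `novelty_ratio` helper and the sample texts are hypothetical, it compares word tokens rather than meaning, and it is not how Google measures information gain.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of distinct word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def novelty_ratio(draft: str, competitor_pages: list[str]) -> float:
    """Share of the draft's distinct terms that appear in no competitor page."""
    draft_terms = tokenize(draft)
    if not draft_terms:
        return 0.0
    seen_terms: set[str] = set()
    for page in competitor_pages:
        seen_terms |= tokenize(page)
    return len(draft_terms - seen_terms) / len(draft_terms)


# Hypothetical sample texts, for illustration only.
draft = "Our survey of 200 editors found review time doubled"
competitors = ["Editors review content", "Review time matters for editors"]
ratio = novelty_ratio(draft, competitors)
print(f"{ratio:.2f}")
```

A low ratio does not prove a draft is unhelpful, and a high one does not prove it is valuable. The number is most useful as a prompt for an editor to ask what the article adds that the existing results do not.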

Leveraging the Quality Rater Guidelines to Inform Your Content Strategy

The Search Quality Rater Guidelines serve as a master rubric for what Google considers high quality. While human raters don't have a direct dial to turn your rankings up or down, their feedback is used to train the machine learning models that do. Treating these guidelines as an internal quality control checklist is the smartest way to future-proof your strategy. Adopting this rubric allows you to align your writing with the same goals the algorithm is trying to achieve.

The Role of Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T)

The E-E-A-T framework is the cornerstone of how Google evaluates informational content today. While expertise and authoritativeness have always been important, the addition of experience in December 2022 has changed the game for high-volume producers. Google's helpful content system now places an unprecedented emphasis on first-hand, real-world involvement in a topic. First-hand experience builds topical authority by providing details that generic summaries cannot replicate.

Experience is the element that AI and generic writers often lack. It is the difference between someone explaining how a car engine works based on a manual and a mechanic describing the specific sound of a failing alternator. To rank in a world of commodified information, you must weave evidence of this first-hand involvement into every article you publish. Maintaining first-hand experience is critical for brands building topical authority in the semantic web and leveraging entities in the Knowledge Graph.

Expertise and authoritativeness build on that experience to create a profile of trustworthiness. Trust is earned when your content is consistently accurate, well-cited, and transparent about its origins. If your site lacks these elements, no amount of keyword optimization will save it from being classified as unhelpful. Trustworthiness stands as the most critical component of the E-E-A-T framework. It acts as the lens through which experience, expertise, and authoritativeness are validated.

Demonstrating E-E-A-T at scale is difficult but not impossible. It requires a commitment to sourcing information from real experts and ensuring that your editorial standards are rigorous. When you prioritize these quality signals, you're telling Google that your site is a reliable destination for users. Cultivating user trust remains the ultimate goal of the core algorithm.

Enhancing E-E-A-T Through Source Transparency and Disclosures

Helpfulness is intrinsically linked to transparency. Google prioritizes content that clearly identifies its sources and provides clear attribution for any external data used. This includes using schema markup to define authors and their specific expertise in a way that the Knowledge Graph can interpret.
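As an illustration of the author markup mentioned above, the following minimal sketch builds a schema.org `Article` description with a `Person` author and serializes it as JSON-LD. The `build_article_jsonld` helper, the author name, and the URL are placeholders invented for this example; `jobTitle`, `knowsAbout`, and `sameAs` are standard schema.org `Person` properties, but which of them any search system actually uses is not something this sketch can confirm.

```python
import json


def build_article_jsonld(headline, author_name, job_title, topics, profile_urls):
    """Return a schema.org Article with a Person author as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "knowsAbout": topics,      # subjects the author has expertise in
            "sameAs": profile_urls,    # profiles that identify the same person
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values, for illustration only.
markup = build_article_jsonld(
    headline="How to Audit a Content Library",
    author_name="Jane Example",
    job_title="Senior Editor",
    topics=["content audits", "technical SEO"],
    profile_urls=["https://example.com/authors/jane-example"],
)
print(markup)
```

The resulting string is typically embedded in the page inside a `<script type="application/ld+json">` element. Generating it from your CMS author records keeps the markup consistent across a large library.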

Beyond basic citations, high-output brands should include editorial disclosures that explain the role of AI in their production process. By being upfront about how content is researched and verified, you build a layer of trust with readers, which is the same integrity that quality-focused ranking systems are designed to recognize.

How to Demonstrate First-Hand Knowledge in Every Piece of Content

High-output teams can show experience without necessarily slowing down production by integrating specific human markers. Content that aligns with the Google helpful content system is designed to solve problems for a specific audience rather than just gaming search rankings. Using case studies, personal anecdotes, or original photography can quickly prove to both the reader and the algorithm that you've actually engaged with the subject.

Unique perspectives and proprietary data are also excellent ways to distinguish your work from generic competitors. If you're writing about software, include screenshots of your own tests rather than using stock images. These small details serve as proof of involvement that generic AI-generated content can't replicate without human intervention. To help your team, you can implement a checklist for first-hand experience:

- Include original photography or unique screenshots of the process.

- Add quotes or insights from team members who have performed the task.

- Cite proprietary data, internal case studies, or authoritative outbound links to .gov and .edu sources to back up claims.

- Discuss specific challenges or edge cases encountered during the work.

- Incorporate specific entities and related concepts to improve semantic mapping and Knowledge Graph connectivity.

Ultimately, the human element is what makes content feel authentic to a reader. Whether it's a specific tone of voice or an opinionated take on an industry trend, these features provide the experience that Google is looking for. It is about being more than just a relay for information. Instead, you must become an active participant in the conversation.

Integrating User Experience Signals into Large-Scale Content Production

There's a significant bridge between editorial quality and technical performance that many content teams ignore. Google considers a page unhelpful if the user can't access the information due to intrusive ads or a confusing layout. You can't separate the words on the page from the environment in which they're read. High-growth brands must pay close attention to keyword cannibalization and content expansion to keep their user paths clear.

Beyond Core Web Vitals: Measuring Real User Satisfaction

While technical metrics like LCP and CLS are important, Google's systems also use a range of automated user experience signals to determine whether a page provides a frustrating or helpful environment. Behavioral signals, such as the absence of pogo-sticking (bouncing straight back to the results page), suggest that a user found what they were looking for. If users consistently land on your page and stay there to read, it's a strong signal of satisfaction.

Dwell time and meaningful interactions are better indicators of quality than simple click-through rates. A high-output strategy must account for these patterns by ensuring that the most important information is easy to find and engage with. If your content is buried under layers of unnecessary intro text, users will bounce, and Google will notice. The dependency of information access on page performance makes technical health and editorial value inseparable.

Measuring real user satisfaction requires looking at the post-click experience in detail. You should be analyzing where users drop off and whether they're taking the next logical step on your site. Content that satisfies a user's intent naturally leads to higher engagement and a more positive sitewide classification. This feedback loop is what drives long-term visibility in a competitive market.

The Importance of Page Layout and Accessibility in Content Performance

Page layout directly impacts the perceived helpfulness of your articles. If a reader is confronted with a wall of text or a barrage of pop-ups, they'll likely leave before they even read your first sentence. Clear headings, readable fonts, and a mobile-responsive design aren't just nice-to-haves; they're functional requirements for helpful content in a mobile-first world.

Avoid excessive ads that distract from the main content or make it difficult to navigate. Google's algorithms are increasingly sensitive to layouts that prioritize monetization over the user experience. A clean, accessible page layout signals that you value the reader's time and attention, reinforces your status as a people-first publisher, and keeps the domain in the good graces of the automated classifier.

Helpfulness is as much about delivery as it is about the words you choose. When you make your content easy to consume and navigate, you're reducing the friction between the user and the answer they need. A frictionless user experience is a core component in how Google determines which sites deserve top rankings. High-output teams should standardize their formatting to ensure every page meets these accessibility standards.

A Framework for Auditing Your Content Against People-First Content Metrics

An audit isn't a one-time event. It serves as a defensive measure against the constant volatility of search algorithms. In a high-output environment, you need a recurring process to ensure your existing library doesn't become a liability. A proactive approach to quality control keeps your sitewide signals healthy and your rankings stable. To excel, you need an enterprise content quality audit framework that everyone can follow.

Implementing an Internal Content Quality Scorecard

Creating an internal quality scorecard based on Google's self-assessment questions is the best way to standardize excellence. Your editors should use this rubric to grade every piece of content before it goes live, and if a draft doesn't meet a minimum threshold, it shouldn't be published. After publication, track people-first content metrics like scroll depth and internal link click-through rates to confirm that the editorial grades hold up with real readers.

Originality checks ensure that the content isn't just a rehash of other sites. Depth measures whether the article truly covers the topic or skims the surface with generic platitudes. Clarity evaluates how easily the reader can understand the main takeaways and apply the information to their own situation. A standardized scorecard provides an objective framework that removes the guesswork from the editorial process.
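The three criteria above can be turned into a simple pre-publication gate. The sketch below is one possible shape for such a scorecard, not a prescribed formula: the weights, the 1-to-5 scale, and the `PUBLISH_THRESHOLD` value are all hypothetical and should be tuned to your own editorial standards.

```python
from dataclasses import dataclass

# Hypothetical weights and threshold; adjust to your own editorial standards.
WEIGHTS = {"originality": 0.40, "depth": 0.35, "clarity": 0.25}
PUBLISH_THRESHOLD = 3.5  # weighted score on a 1-5 scale


@dataclass
class Scorecard:
    """Editor-assigned grades for one draft, each on a 1-5 scale."""

    originality: int  # does it add something other pages lack?
    depth: int        # does it cover the topic beyond generic platitudes?
    clarity: int      # can the reader apply the main takeaways?

    def weighted_score(self) -> float:
        """Combine the three grades using the weights above."""
        return sum(getattr(self, name) * weight for name, weight in WEIGHTS.items())

    def ready_to_publish(self) -> bool:
        """True when the weighted score meets the publishing threshold."""
        return self.weighted_score() >= PUBLISH_THRESHOLD


strong_draft = Scorecard(originality=4, depth=3, clarity=5)
weak_draft = Scorecard(originality=2, depth=3, clarity=4)
print(round(strong_draft.weighted_score(), 2), strong_draft.ready_to_publish())
print(round(weak_draft.weighted_score(), 2), weak_draft.ready_to_publish())
```

Weighting originality highest reflects this article's emphasis on unique value, but that is a design choice. Some teams may prefer a hard minimum on every criterion so that a polished but derivative draft cannot pass on clarity alone.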

Implementing a rubric allows you to scale without losing control over the nuances that make content helpful in Google's eyes. By holding every writer to the same high standard, you build a consistent brand voice that users and algorithms can trust. A scorecard helps your team move from subjective opinions to data-driven quality control.

Identifying and Removing Unhelpful Content from Your Portfolio

Content pruning is a necessary part of maintaining a healthy domain. Because unhelpful content can lower sitewide rankings, you must be willing to remove the dead weight dragging down your best pages. The pruning process involves identifying underperforming assets and deciding whether to delete, redirect, noindex, or rewrite them. Sites that build a content moat often do so by ruthlessly maintaining their quality standards.

The criteria for pruning should be based on engagement metrics and alignment with your current quality standards. If an old post is thin, outdated, or triggering negative user signals, it is better to remove it. Sometimes, a smaller, high-quality portfolio is much more powerful than a massive, mediocre one. You can use a decision matrix to simplify this process.

- Delete pages with zero traffic, zero backlinks, and outdated information.

- Redirect thin pages to a more comprehensive pillar post on the same topic.

- Rewrite pages that have good backlinks but are no longer considered helpful by modern standards.

- Noindex utility pages that are necessary for users but offer no search value.
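The decision matrix above can be sketched as a small rule function for running over a content inventory export. The `Page` fields and the order in which the rules are checked are assumptions made for illustration; in particular, the matrix does not say what to do with a thin page that also has backlinks, so this sketch chooses to rewrite it rather than redirect it.

```python
from dataclasses import dataclass


@dataclass
class Page:
    """One row of a hypothetical content inventory."""

    url: str
    monthly_visits: int
    backlinks: int
    is_outdated: bool
    is_thin: bool
    is_utility: bool = False        # e.g. login or account pages
    has_pillar_page: bool = False   # a fuller guide on the same topic exists


def pruning_action(page: Page) -> str:
    """Return 'noindex', 'delete', 'rewrite', 'redirect', or 'keep'."""
    if page.is_utility:
        return "noindex"
    if page.monthly_visits == 0 and page.backlinks == 0 and page.is_outdated:
        return "delete"
    if page.backlinks > 0 and (page.is_thin or page.is_outdated):
        return "rewrite"
    if page.is_thin and page.has_pillar_page:
        return "redirect"
    return "keep"


inventory = [
    Page("/old-news", 0, 0, is_outdated=True, is_thin=True),
    Page("/short-tips", 10, 0, is_outdated=False, is_thin=True, has_pillar_page=True),
    Page("/linked-guide", 5, 12, is_outdated=True, is_thin=False),
    Page("/login", 100, 0, is_outdated=False, is_thin=False, is_utility=True),
    Page("/flagship", 500, 3, is_outdated=False, is_thin=False),
]
actions = {page.url: pruning_action(page) for page in inventory}
print(actions)
```

Treat the output as a first pass for an editor to review, not an automated deletion list. Judging whether a page is thin or outdated still requires a human to read it.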

Cleaning up your site signals to Google that you're committed to maintaining a high-value environment for users. It shows that you're actively monitoring your output and taking responsibility for the information you provide. The helpful content system is specifically designed to reward this level of consistent editorial oversight.

Hypothetical Case Study: Recovering from the March 2024 Core Update

Consider a hypothetical enterprise site focused on high output volume without editorial oversight. Before the March 2024 update, they published 200 articles per month using basic AI prompting. After the update, their organic traffic dropped by 65% across the entire domain. The automated classifier had flagged the site as unhelpful due to low information density.

Their recovery strategy involved a total content audit. They identified that 60% of their content was syntactically redundant. They removed 3,000 unhelpful pages and consolidated another 1,000 into deep, expert-led guides. They added first-hand experience markers to every remaining page and improved their page load speeds.

Within four months, their traffic began to recover. By the six-month mark, their overall visibility exceeded their pre-update levels. This hypothetical scenario illustrates how sitewide signals can be reversed if you commit to a people-first strategy. Such a recovery highlights the importance of quality over sheer quantity in the modern search landscape.

How to Scale Content Production Without SEO Penalties

Scaling to 50 or more articles per month requires a robust AI-human hybrid workflow. AI is a powerful tool for research and outlining, but it's a means to an end, not the end itself. Google does not penalize AI-generated content specifically. It penalizes unhelpful content regardless of how it was produced. The goal is to use technology to enhance your productivity while keeping a human firmly in the driver's seat.

Establishing Effective Review Loops for High-Volume Workflows

High-output strategies often fail when the human-to-AI ratio is too low. Without a robust editorial review process, you'll inevitably publish factual errors and repetitive prose. Maintaining a human-in-the-loop review cycle ensures you effectively balance AI content with Google E-E-A-T requirements.

Review loops should focus on fact-checking, tone alignment, and the addition of real-world experience markers. Editors need to be trained to spot AI tells and replace generic sentences with punchy, opinionated insights. Strict editorial reviews ensure that every piece of content meets people-first criteria before publication. This process allows you to maintain a high velocity without sacrificing the soul of your brand.

Scale without oversight is a recipe for disaster in the current ranking environment. By investing in a strong editorial team, you're building a moat around your content strategy that competitors relying solely on automation can't cross. Quality is the only sustainable competitive advantage in a world of infinite, low-cost content.

Maintaining Brand Voice and Originality in the Age of Generative AI

Maintaining a consistent brand voice is essential when using AI to assist with your drafts. Google evaluates content produced by Large Language Models (LLMs) using the same criteria applied to human-generated material. If your output sounds like every other generic bot on the internet, you'll fail the helpfulness test. You must refine the raw material to ensure it reflects your unique perspective.

Use AI for the heavy lifting of research, outlining, and summarizing data. However, you must leave the analysis and opinion to your human experts. AI is good at gathering and condensing information, though its output still needs fact-checking, and it is weak at nuance and first-hand experience. By adding your own unique insights, you ensure that the final product feels authentic and valuable to your specific audience.

Originality is your best defense against algorithmic suppression. AI-generated drafts should be treated as raw material that needs to be polished and checked against your brand's unique perspective. A hybrid model allows for increased production volume without triggering negative signals from the helpful content classifier.

Future-Proofing Your Strategy with Semantic Density

The future of SEO is about semantic density. Google is no longer looking for keyword frequency but for how many related entities and concepts a single piece of content covers. A deeper understanding of entity relationships is required to navigate the shift toward semantic density. Brands that excel here often utilize pillar content services to map out their entire topical landscape.

The Impact of AI Overviews and SGE on Informational Content

AI Overviews, which began as the Search Generative Experience (SGE) experiment, are changing how users consume information. Google often provides a direct answer at the top of the search results, which can reduce click-through rates for simple queries. To survive, your content must offer more depth than a simple AI summary can. You must become the source that the AI cites for more detailed information.

Succeeding in AI-driven search requires moving away from surface-level answers toward complex explanations. If an AI can summarize your entire article in two sentences, your article lacks the density required for long-term success. Focus on providing original data, expert commentary, and unique perspectives that an LLM cannot generate on its own. Focusing on unique insights ensures your site remains a destination rather than a mere data point for a crawler.

The future of search belongs to those who can deliver high volumes of genuine value without taking shortcuts. Stay up to date with the Google Helpful Content system to ensure your strategy evolves with the platform. If you commit to being the most helpful source in your niche, the algorithms will eventually find you and reward you. It is a long game, but it is the only one worth playing for a lasting digital presence.

Future-Proof Your Content Engine with Brand Voice

Maintaining Google's helpful content signals while scaling production is a significant challenge, but these two goals are not mutually exclusive. The future of search belongs to brands that can deliver massive volumes of genuine, people-first value through a structured and quality-driven framework. By aligning your high-output strategy with E-E-A-T principles and a rigorous editorial process, you build a resilient domain that thrives even during major algorithmic shifts. Adhering to these quality standards forms the foundation of content marketing for SaaS and other high-growth industries.

We understand the technical and creative hurdles involved in producing ready-to-publish content that satisfies both users and search engines. Scaling without the right tools often leads to the exact unhelpful signals that trigger ranking drops and sitewide suppression. Our expertise is designed to eliminate these risks by providing content that's already optimized to meet the latest quality standards.

Brand Voice creates SEO-optimized, technically accurate articles that adhere to Google's people-first metrics while maintaining your unique brand voice. Our approach ensures you can drive traffic and engage your audience without the manual labor of building these complex workflows from scratch. Book a demo today to see how we can help you scale your content production and achieve real, sustainable results for your business.